My Intel NUC 11 Specs & Setup

- Intel® NUC 11 Performance barebones kit (NUC11PAHi7) with a Core i7-1165G7 and Intel Xe Graphics (96 execution units).

- Samsung 1TB PCIe 4.0 NVMe 980 PRO SSD.

- Crucial 64GB (2×32GB) DDR4 3200MHz SODIMM RAM.

- Dell U3419W Ultrawide Monitor with a VESA mounting bracket attached.

The initial install using the Pop!_OS 20.04 LTS image was a breeze. This OS is based on Ubuntu 20.04 LTS, which folks like Dell and Lenovo already use with their Tiger Lake laptops.

To boot the NUC from USB, enter the BIOS (F2), disable Secure Boot, and enable Boot from USB. Then either use the boot selection menu (F7) or set the BIOS to boot from USB first. The BIOS itself already has an update available, “PATGL357”, presumably named to honour Pat Gelsinger’s recent return to Intel as their new CEO 😀

After that update, setting up the rest of the desktop environment to your liking is mostly a case of using the Ubuntu package manager (apt), Snap, Flatpak, Homebrew, or GNOME Extensions.

The PC itself is so small I’ve mounted it on the back of my monitor using a VESA plate to give my desk a cleaner look. Mounting the VESA plate to the back of the Dell monitor was a bit of a challenge. The curved plastic cowl on the back of the monitor is proud of the VESA screw plate, so I had to use some spare risers I had from other TV mounts to get it to fit. Dell could improve on this I feel.

Compared to my older PCs, the #NUCPop is very fast. I had a MacBook Pro 2017 and a Lenovo E550 laptop. The NUC easily blows them out of the water.

What works…

Almost everything 🙂

Video and audio via USB-C DP and HDMI work fine, although the NUC will occasionally boot at a lower resolution than the monitor is capable of for no apparent reason. Turning off the monitor for a few seconds and then back on seems to do the trick.

USB devices have all worked so far, including external HDDs, webcam, microphones, USB thumb drives, USB hubs etc.

Pop!_OS also seems stable. I’ve not yet had any unexplained outages or crashes.

What doesn’t (yet) work…

The headphone jack 😦

So far I’ve had limited success getting the NUC’s built-in headphone jack to work with headphones, headsets, or microphones.

I think this is down to a couple of issues. The first is to do with how the hardware (Realtek ALC256) detects the presence of a plug in the headphone socket. The second is how the kernel treats the HD Audio devices.

The first issue (detecting the jack plug) is helped by adding a /etc/udev/rules.d/51-Realtek-Jackdetect.rules file (contents shown below). This brings the built-in audio out of the ‘unavailable’ state in PulseAudio Volume Control, but it doesn’t fully solve the problem.

ACTION=="add", SUBSYSTEM=="sound", ATTRS{chip_name}=="ALC256", ATTR{hints}="jack_detect=false"

ACTION=="add", SUBSYSTEM=="sound", ATTRS{chip_name}=="ALC256", ATTR{reconfig}="1"

The second issue (actually using the jack) is helped a little by adding an extra ‘options’ line to /etc/modprobe.d/alsa-base.conf (as shown below). With this edit, a regular wired headset (I have a basic Sennheiser) works for both playback and microphone recording.

options snd-hda-intel model=dell-headset-multi

Having made a recording with a headset using this configuration, I’m confident the hardware is at least ‘capable’ of recording and playing something, even if the current setup gets in the way.
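Before trying different model options, it can help to confirm which codec the kernel has actually detected. The following is only a rough sketch, assuming the usual ALSA layout where each card exposes a /proc/asound/card*/codec#* file whose text includes a "Codec:" line. The function names parse_codec_name and find_codecs are my own and not part of any tool.

```python
import re
from pathlib import Path
from typing import Dict, Optional


def parse_codec_name(codec_text: str) -> Optional[str]:
    """Return the value of the first 'Codec:' line, or None if there isn't one."""
    for line in codec_text.splitlines():
        match = re.match(r"^Codec:\s*(.+)$", line)
        if match:
            return match.group(1).strip()
    return None


def find_codecs(proc_root: str = "/proc/asound") -> Dict[str, str]:
    """Map each codec file found under proc_root to its reported codec name.

    Assumes the standard ALSA proc layout (card0/codec#0 and so on).
    """
    codecs = {}
    for path in sorted(Path(proc_root).glob("card*/codec#*")):
        name = parse_codec_name(path.read_text(errors="replace"))
        if name:
            codecs[str(path)] = name
    return codecs


if __name__ == "__main__":
    # Print whatever codecs this machine reports, one per line.
    for path, name in find_codecs().items():
        print(f"{path}: {name}")
```

If the ALC256 doesn't show up in the output, the udev rules above (which match on chip_name "ALC256") would have nothing to apply to.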

But this solution isn’t complete. Neither my regular wired headphones (basic Gummy plus) nor my wired lavalier mic (Synco Lav-S6 condenser) works with this setup.

The complete solution could just be down to finding the right combination of settings from kernel.org, or it could be a general bug or incompatibility that isn’t yet fixed (possibly in the kernel or drivers or settings??).

As a workaround, I switched to my backup devices, which are either the built-in mic on my Logitech C930 webcam, or my Amazon Basics USB travel microphone. Both of these work just fine, but I really would like to use my lavalier mic. It’s so much richer and warmer than the slightly robotic Logitech or the distant Amazon.

For now I’ve backed out both of the changes detailed above until I have time to revisit the issue. They complicate the audio setup in the desktop settings app and in PulseAudio Volume Control. Ho hum.

Other strangeness…

DisplayPort over USB-C is a great idea that seems to be badly implemented. In theory, your display can take an audio and video signal and provide USB hub features all through one cable. This means the monitor can act as a KVM switch, automatically connecting your input devices to the PC that the display signal is coming from.

In practice DisplayPort over USB-C is very unreliable. Even using the USB-C cable supplied by Dell, the system has a mind of its own. Sometimes it will boot to a blank screen and the monitor then powers off. Other times it boots to the wrong screen resolution. I’m unsure where the responsibility lies, but it’s fair to say that USB is still a hot mess. The same thing happens with Apple MacBooks with this display.

I got so frustrated with it that I’ve switched back to regular HDMI, with USB 3 coming back from the monitor via a regular USB-C to USB-A cable (one that isn’t DisplayPort capable). This does use up a precious USB-A port though 😦

Another strange issue I had was with the NUC LED Power button ‘breathing’ while the NUC was fully powered on and running. This only happened once, and honestly, I think the NUC just got confused into thinking that the powered on state was actually the sleep state somehow. Shutting down the NUC, yanking out the power cord, and leaving it out for 30 seconds before restarting again restored the Power Button LED back to normal operation.

What else did I try?

Other NUCs in this family are listed as supporting Red Hat Linux. I presume they mean RHEL, which isn’t totally free but shares much in common with Fedora. Fedora is a fast-moving distribution, meaning that you get very recent internals where possible. I booted a live USB with Fedora 33 Workstation, but the audio issues with the built-in headphone jack were still present.

I may give Intel’s own Clear Linux a shot, to see if that fares any better. You never know, right? As it’s from Intel, maybe they fixed these issues already. I may also try Pop!_OS 20.10 and Ubuntu 20.10 just to see if their more recent inclusions solve the issue.

What does the ‘NUCPop’ do well?

Pretty much everything!

Glimpse, Inkscape, and other essential software start really quickly, usually in a second or two. Even IntelliJ IDEA takes just a few seconds, and on my old laptop that was a pretty slow starter. OBS Studio will even record and stream at Full HD resolution at 30 fps. The only game I’ve tried so far is TuxKart and that worked fine, but to be fair, it also works fine on my Raspberry Pi 400!

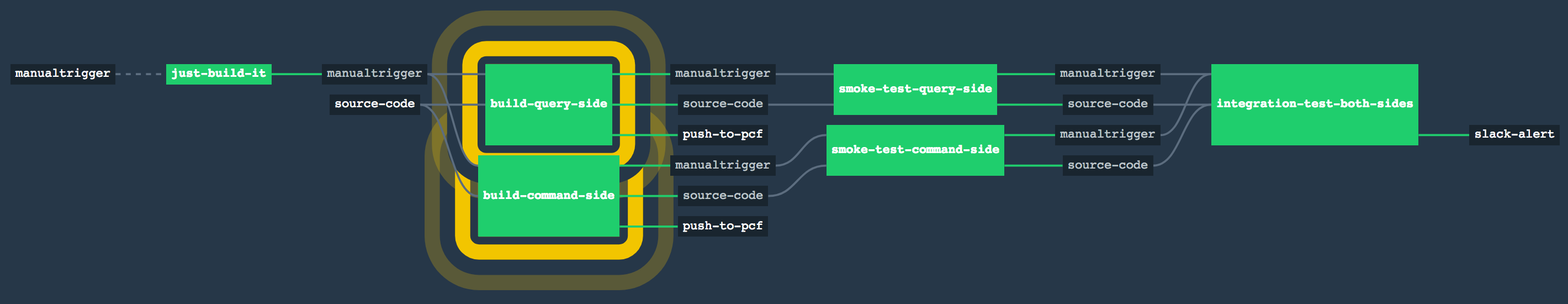

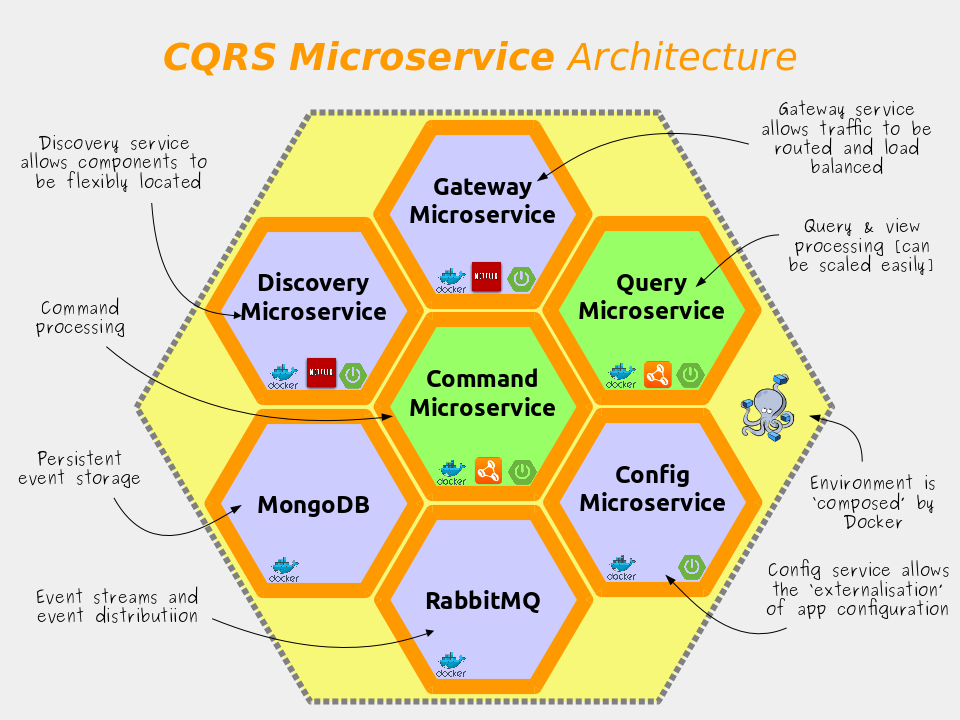

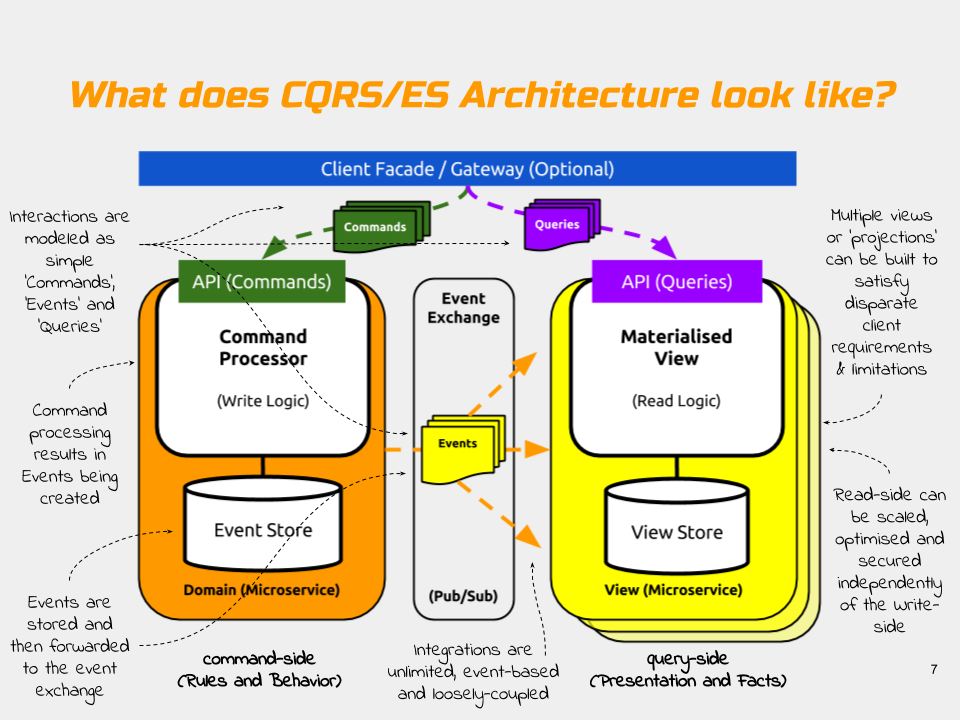

For my cloud development work, I can run Docker, Kubernetes, IntelliJ, Slack, and browsers, compilers, etc, and still have plenty of memory and CPU left for other tasks.

One of the most punishing development tasks I do as part of my day job is building GraalVM Native Images for Spring applications. On my old laptop, this would take 7-10 minutes, maxing out the processor and memory for the duration (my laptop had 16GB RAM and a 5th Gen Core i7 CPU with 4 threads).

The NUC can complete the same task in just 2 minutes 50 seconds (with warm image download caches). And, although the CPU was still totally maxed out, the NUC still had 40GB of RAM to play with. With that in mind, I may have over-specified the RAM. I could probably have done fine with 32GB instead of the full 64GB.

Another task I do that can take a while is video rendering. A 25-minute show I recently produced used to take 2 hours to render at Full HD, 30 fps on my old laptop. Now, on the NUC, the same project can be fully rendered with exactly the same settings in just 22 minutes.
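To put those timings in perspective, here’s a quick back-of-the-envelope sketch that turns them into speedup factors. The numbers are the ones quoted above. The helper names (to_seconds, speedup) are just mine for illustration, and this is rough arithmetic rather than a proper benchmark.

```python
def to_seconds(hours: float = 0, minutes: float = 0, seconds: float = 0) -> float:
    """Convert a duration to seconds."""
    return hours * 3600 + minutes * 60 + seconds


def speedup(old_seconds: float, new_seconds: float) -> float:
    """How many times faster the new run is compared with the old one."""
    return old_seconds / new_seconds


# GraalVM Native Image build: 7-10 minutes on the old laptop, 2:50 on the NUC.
build_new = to_seconds(minutes=2, seconds=50)
build_low = speedup(to_seconds(minutes=7), build_new)
build_high = speedup(to_seconds(minutes=10), build_new)

# Video render: 2 hours on the old laptop, 22 minutes on the NUC.
render = speedup(to_seconds(hours=2), to_seconds(minutes=22))

if __name__ == "__main__":
    print(f"Native Image build: {build_low:.1f}x to {build_high:.1f}x faster")
    print(f"Video render: {render:.1f}x faster")
```

So the Native Image build comes out at roughly 2.5 to 3.5 times faster, and the video render at around 5.5 times faster.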

Got questions or solutions?

Post them in the comments below, or send me a message on Twitter @benbravo73.

UPDATE 10-03-2020: It looks like there might be a similar audio headset problem with the older NUC 10. A fix (quirk) for it has been added to the Linux 5.11 kernel, which offers some hope for the NUC 11. There’s also a similar patch for the Acer Swift.

UPDATE 18-02-2020: I spun up Live USB distributions for Ubuntu 20.10 and Clear Linux, but neither fixed the headset jack issues yet.

UPDATE 20-02-2020: System76 have advised me to start a dialogue with them on chat.pop-os.org as they may be able to help figure out the audio issue. So I’ll give that a try.
